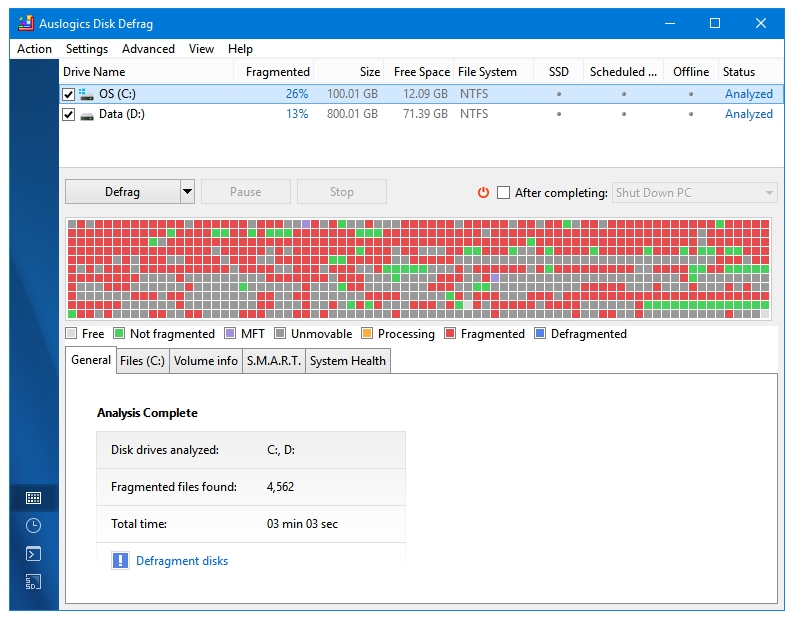

Heavily fragmented determined by what method? And what's the threshold (the point at which you consider it needing defragmenting?) The idea here is that some compression was lost during the defrag which may be regained via compress-force=lzo? Do I have to run defrag again, or should I balance in this case? Or for some reason it didn't compress the data, because it erroneously detected them as incompressible Sure output of sudo btrfs fi usage /data is (note: I've deleted some files in-between ncdu now reports 35.1 TB which is 0.9TB less than Used below): Overall:ĭata,RAID10: Size:36.83TiB, Used:36.00TiB (97.74%) Sudo btrfs subvolume list /data returns an empty list.Ĭat /proc/mounts | grep /data results in: /dev/sdd /data btrfs rw,noatime,compress=lzo,space_cache,autodefrag,subvolid=5,subvol=/ 0 0Īny help towards regaining some of the lost free space is much appreciated. I've checked with sudo find /data/ -type f -links 1 -print | less that there are no unexpected duplicates of files. (Note the delta between used=36.84 and what ncdu reports.

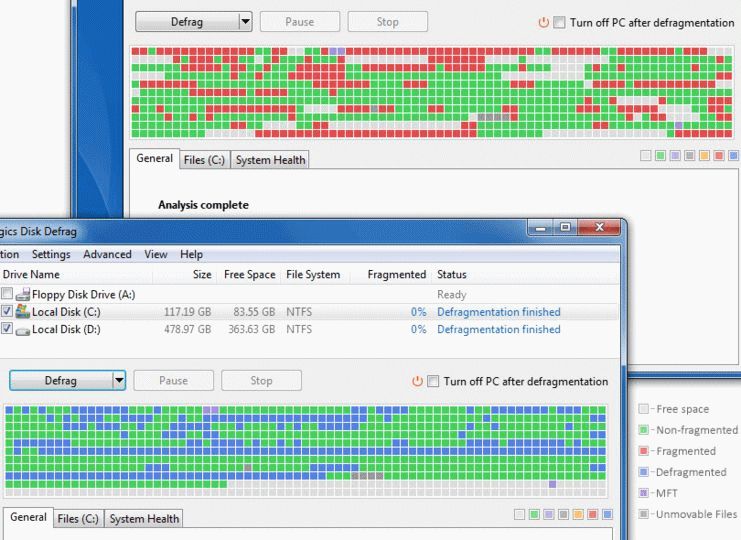

GlobalReserve, single: total=512.00MiB, used=0.00B Running sudo ncdu -x /data results in: Total disk usage: 35.9 TiB Apparent size: 35.9 TiB Items: 37728ĭata, RAID10: total=36.85TiB, used=36.84TiB This did not seem to help, as only the ratio of total / used shrank. So I tried sudo btrfs balance start /data/ (full balance), and also restarted some containers with references into /data while the rebalance was running. After the defrag had finished, ~1TB of free space had vanished. While the defrag was happening, the free space was incrementally decreasing.

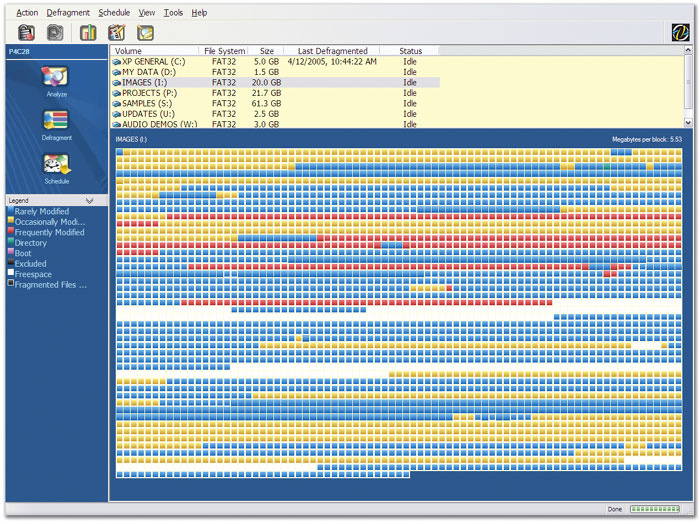

My files where heavily fragmented so I ran an online sudo btrfs fi defrag -v -r /data/.
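The space figures quoted in the question can be checked with a short Python sketch. It parses the `Kind, profile: total=…, used=…` space lines and the `/proc/mounts` entry exactly as they appear in the question. From those it computes how much data space is allocated but unused and how far btrfs's `used` figure is from the ncdu total. It also reports whether the mount uses `compress` or `compress-force`. The helper names (`parse_space_line`, `parse_mount_line`, `compression_mode`) are hypothetical. The parser assumes only the line formats shown in the question and does not understand the `Size:…, Used:…` layout printed by `btrfs fi usage`.

```python
import re

# Binary size suffixes as printed by btrfs in the question (e.g. "36.85TiB", "0.00B").
UNITS = {"B": 1, "KiB": 2**10, "MiB": 2**20, "GiB": 2**30, "TiB": 2**40}
TIB = UNITS["TiB"]
SIZE = r"([\d.]+)(B|KiB|MiB|GiB|TiB)"

# Matches space lines such as "Data, RAID10: total=36.85TiB, used=36.84TiB".
SPACE_LINE = re.compile(r"^(\w+),\s*(\w+):\s*total=" + SIZE + r",\s*used=" + SIZE + r"$")


def parse_space_line(line):
    """Return (kind, profile, total_bytes, used_bytes) for one space line."""
    m = SPACE_LINE.match(line.strip())
    if m is None:
        raise ValueError(f"unrecognised space line: {line!r}")
    kind, profile, total, t_unit, used, u_unit = m.groups()
    return kind, profile, float(total) * UNITS[t_unit], float(used) * UNITS[u_unit]


def parse_mount_line(line):
    """Split a /proc/mounts entry into (device, mount point, fs type, options)."""
    device, mountpoint, fstype, opts, _dump, _pass = line.split()
    options = {}
    for opt in opts.split(","):
        key, _, value = opt.partition("=")
        options[key] = value or None  # flags such as "rw" carry no value
    return device, mountpoint, fstype, options


def compression_mode(options):
    """Return ("compress-force", alg) or ("compress", alg), or None if neither is set."""
    for key in ("compress-force", "compress"):
        if key in options:
            return key, options[key]
    return None


if __name__ == "__main__":
    kind, profile, total, used = parse_space_line("Data, RAID10: total=36.85TiB, used=36.84TiB")
    print(f"{kind} ({profile}): allocated but unused {(total - used) / TIB:.2f} TiB")
    # prints "Data (RAID10): allocated but unused 0.01 TiB"

    # ncdu reported "Total disk usage: 35.9 TiB" for the same mount.
    print(f"btrfs used minus ncdu total: {used / TIB - 35.9:.2f} TiB")
    # prints "btrfs used minus ncdu total: 0.94 TiB"

    mount = ("/dev/sdd /data btrfs rw,noatime,compress=lzo,space_cache,"
             "autodefrag,subvolid=5,subvol=/ 0 0")
    print(compression_mode(parse_mount_line(mount)[3]))
    # prints "('compress', 'lzo')"
```

btrfs prints its figures with two decimals and ncdu prints them with one, so the computed gaps are only as precise as those rounded values.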
